Can Large Language Models Reason and Plan?

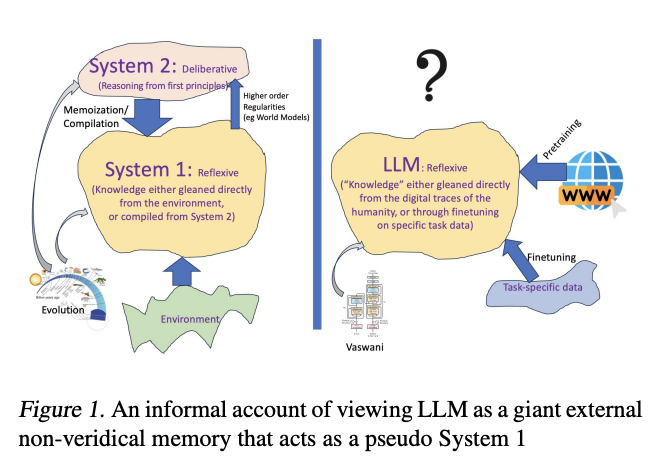

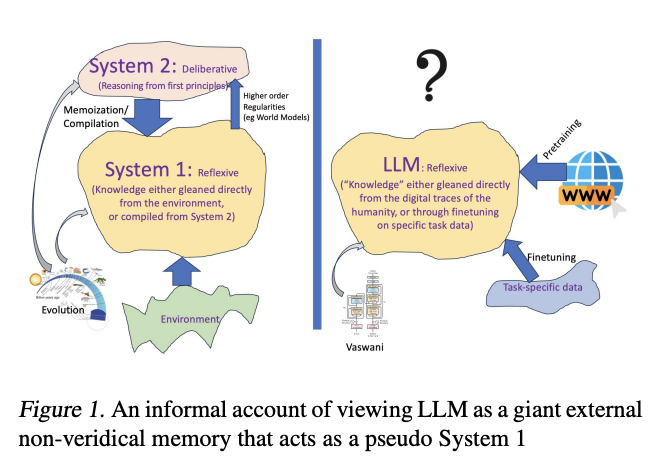

In summary, “Can Large Language Models Reason and Plan?” provides a critical examination of the planning and reasoning capabilities of LLMs, highlighting the limitations of these models in performing tasks that require more than approximate retrieval of information.

The study suggests that while LLMs can be valuable tools in generating ideas and potential solutions, their ability to autonomously engage in principled reasoning and planning remains questionable. The highlighted LLM-Modulo framework offers a promising direction for leveraging the strengths of LLMs while ensuring the reliability of the solutions through external verification.

Evaluation Techniques

To assess the planning abilities of LLMs, the research group conducted evaluations using planning instances derived from domains typically used in the International Planning Competition (IPC), including the well-known Blocks World. These tests were initially performed on GPT-3 and later repeated on GPT-3.5 and GPT-4 to observe any improvements across versions.

The study aimed to distinguish between the models’ ability to retrieve approximate answers from their training data and their capacity for genuine planning. A novel approach involved obfuscating the names of actions and objects in planning problems to reduce the effectiveness of approximate retrieval, aiming to test the models’ planning capabilities more rigorously.

Results

The results indicated a modest improvement in the accuracy of generated plans from GPT-3 to GPT-3.5 and GPT-4, with GPT-4 achieving 30% empirical accuracy in the Blocks World domain. However, this accuracy dropped significantly when the names in planning problems were obfuscated, suggesting that the models’ improved performance might be more attributable to enhanced retrieval abilities rather than genuine planning capabilities. The paper also explores two popular techniques for improving planning performance: fine-tuning the LLM on planning problems and prompting the LLM with hints or suggestions. However, these methods did not conclusively prove that LLMs are capable of autonomous planning.

Next Steps

The paper advocates for a framework called “LLM-Modulo,” which combines the idea generation capabilities of LLMs with external model-based plan verifiers. This approach leverages LLMs as a source of potential solutions that are then validated or refined by external verifiers, offering a more reliable method for utilizing LLMs in planning and reasoning tasks.

The paper concludes that while LLMs exhibit remarkable abilities in generating ideas and approximating knowledge, there is no compelling evidence to suggest they are capable of principled reasoning or planning as traditionally understood.